3D-rendered images have become essential visual material for marketers, advertisers, content creators, and others. We are surrounded by 3D rendering, and you’ve probably seen a 3D-rendered image today.

Whether you’re watching animated cartoons, major action movies, or automobile advertisements, browsing through a magazine, driving past billboards on your morning commute, scrolling through social media on your phone, or looking at previews of forthcoming buildings or product designs, you are bound to come across pictures generated by the 3D rendering process. 3D visualization has grown so common and lifelike that you may not even be aware of its presence.

WHAT IS 3D RENDERING?

Simply defined, 3D rendering is the process of generating a 2D picture from a three-dimensional digital scene using a computer. Specific techniques, as well as specialized software and hardware, are used to create the image. The key point is that 3D rendering is a process, not a single action. The pictures are created from data sets that specify the color, texture, and material of each item in the scene.

A LITTLE BIT OF HISTORY

The history of rendering goes back to around 1960, when William Fetter, who is credited with coining the term “computer graphics,” produced a computer-drawn figure of a pilot to study the space required in a cockpit. Then, in 1963, while at MIT, Ivan Sutherland built Sketchpad, a pioneering interactive computer drawing program. He is often called the “Father of Computer Graphics” for his pioneering efforts.

Martin Newell, a researcher at the University of Utah, produced the “Utah Teapot” in 1975, a 3D model that became a standard test object for renderers. This teapot, also known as the Newell Teapot, has grown so iconic that it is considered the computer graphics equivalent of “Hello World.”

TYPES OF 3D RENDERING

We can generate many sorts of rendered images, including realistic and non-realistic images.

A realistic picture might be a photograph-like architectural interior, a product-design image such as a piece of furniture, or an image of a vehicle for the automotive industry. On the other hand, we may construct a non-realistic graphic with a traditional 2D look, such as an outline-type diagram or a cartoon-style image.

AN INTRODUCTION TO THE 3D RENDERING PROCESS

The approach described below shows how 2D pictures are rendered from a 3D scene. Although the process is divided into phases, a 3D artist does not necessarily adhere to this order and may move back and forth between steps. Understanding the client’s vision, for example, is a continuous effort throughout a project.

STEP 1: COMPREHEND THE CLIENT’S VISION

A 3D artist must understand the project before constructing a model. The artist begins by picturing the project in their mind, using blueprints, drawings, and reference pictures provided by the client. Camera angles are generally decided at this phase, based on the 2D designs.

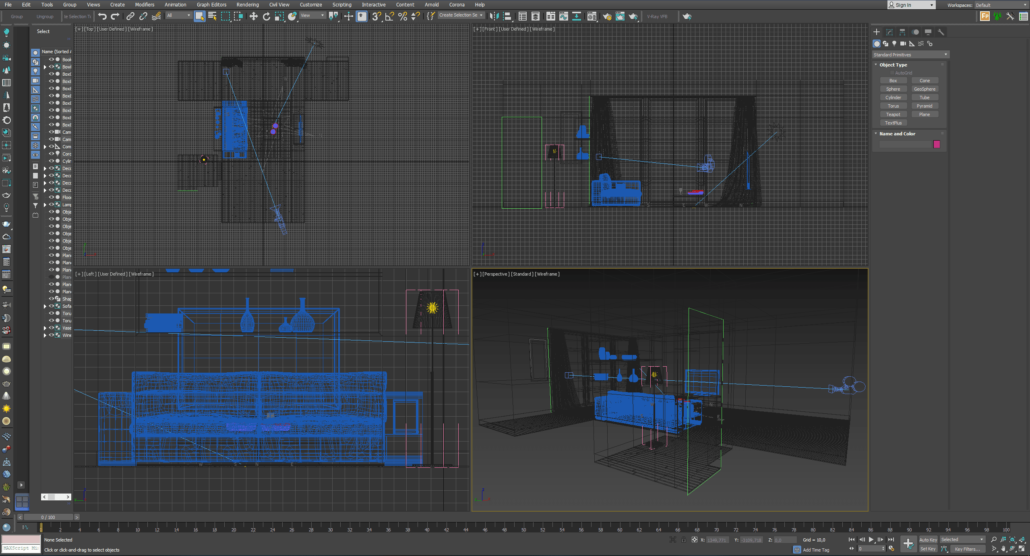

STEP 2: 3D MODELING

To build a digital model, the 3D artist uses specialized 3D modeling tools and software. This step is comparable to constructing the framework of a physical model, except that the model exists only in digital form.

STEP 3: MATERIALS AND TEXTURING

The 3D artist applies images (textures) to the 3D models to make them look as realistic as possible. This is similar to painting a real model or attaching materials and pictures to it. Most of the time, there is also material setup: the settings that determine, for example, whether a surface is matte or glossy. Depending on the situation, artists can also change the roughness of the surfaces and a variety of other parameters.
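To make the matte-versus-glossy setting concrete, here is a minimal sketch of how a roughness value can change a surface's highlight. It uses the Blinn-Phong specular term, which is only one of several shading models a render engine might use. The function name `specular_highlight` and the mapping from roughness to a shininess exponent are assumptions made for this illustration.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def specular_highlight(normal, to_light, to_camera, roughness):
    """Blinn-Phong specular strength in [0, 1].

    roughness is in (0, 1]: values near 1 behave like a matte surface,
    values near 0 like a glossy one.
    """
    n = normalize(normal)
    l = normalize(to_light)
    v = normalize(to_camera)
    # Half vector between the light and camera directions.
    half = normalize(tuple(a + b for a, b in zip(l, v)))
    # Hypothetical mapping chosen for this sketch: rougher -> smaller exponent.
    shininess = 2.0 / (roughness * roughness)
    return max(0.0, dot(n, half)) ** shininess


# Light straight above the surface, camera off to one side.
matte = specular_highlight((0, 0, 1), (0, 0, 1), (1, 0, 1), roughness=1.0)
glossy = specular_highlight((0, 0, 1), (0, 0, 1), (1, 0, 1), roughness=0.1)
```

Away from the mirror direction, the glossy surface returns almost no highlight while the matte one still returns a broad, soft one. That is why a glossy material shows a small, sharp highlight and a matte material a wide, diffuse one.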

STEP 4: LIGHTING

To simulate real-world illumination, the 3D artist places lights in the 3D environment. This procedure is similar to how a photographer or filmmaker would set up lighting before shooting, except that the 3D artist must also set up the sunlight and/or ambient room lighting, often with the help of an HDRI (high-dynamic-range image) environment.
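As a rough illustration of what a placed light contributes, the sketch below computes the diffuse (Lambertian) brightness of a surface point lit by a single point light. The function name `lambert_intensity`, the `light_power` parameter, and the inverse-square falloff are assumptions for this example; render engines offer many light types and falloff models.

```python
import math


def lambert_intensity(point, normal, light_pos, light_power=1.0):
    """Diffuse brightness at `point` from a point light at `light_pos`."""
    to_light = tuple(l - p for l, p in zip(light_pos, point))
    distance = math.sqrt(sum(c * c for c in to_light))
    light_dir = tuple(c / distance for c in to_light)

    normal_len = math.sqrt(sum(c * c for c in normal))
    unit_normal = tuple(c / normal_len for c in normal)

    # Cosine of the angle between the surface normal and the light direction;
    # clamped at zero so light from behind the surface contributes nothing.
    cos_theta = max(0.0, sum(n * d for n, d in zip(unit_normal, light_dir)))

    # Assumed inverse-square falloff with distance.
    return light_power * cos_theta / (distance * distance)


# A floor point lit from directly above, then from the side at an angle.
overhead = lambert_intensity((0, 0, 0), (0, 1, 0), (0, 2, 0), light_power=4.0)
angled = lambert_intensity((0, 0, 0), (0, 1, 0), (2, 2, 0), light_power=4.0)
```

Moving the light farther away or tilting it away from the surface normal lowers the result, which matches the intuition a photographer has when repositioning a lamp.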

STEP 5: RENDERING

Rendering is how the computer creates the 2D picture or images from the scene generated in the preceding phases using different render engines. In the physical world, it is similar to snapping a photograph.

Rendering time can range from a fraction of a second to many days, depending on the complexity of the scene and the desired quality. This step is carried out entirely by the computer.

STEP 6: REFINING

During refinement, draft renders are sent to the client for comments, often in a low-resolution format to speed up revisions. The artist makes the necessary changes to the scene, textures, and lighting until the desired results are obtained. In general, modifications can be made independently: most model updates do not require updating the texturing.

STEP 7: COMPLETE DELIVERY

The client receives the agreed-upon final 2D image or images. The images are delivered in a specified format and size based on the requested resolution. Images for the web are usually optimized, medium-sized “jpg” files, while images for print are high-resolution raw files.

RENDERING TECHNIQUES

1. RAY TRACING

Ray tracing creates an image by tracing rays of light from the camera through a virtual plane of pixels and simulating how they interact with the objects they hit. Different effects require different rays: to obtain shadows, rays are traced toward the light sources; to obtain reflections, additional rays are traced in the reflected direction; and so on.

This method is used to produce photorealistic pictures. When there are many lights and objects in the scene, the time it takes to render an image increases considerably. Reflections, refractions, translucency, and more sophisticated lighting effects must all be considered by 3D artists.
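The idea of tracing extra rays for shadows can be sketched in a few lines. The example below follows one camera ray into a scene of spheres and, at the hit point, traces a second "shadow ray" toward the light. It is a minimal sketch under simplifying assumptions (spheres only, one point light, no reflections or refractions), and all function names are made up for this illustration.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def hit_sphere(origin, direction, center, radius):
    """Nearest distance along a unit-length ray to the sphere, or None."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-4:  # small offset avoids re-hitting the starting surface
            return t
    return None


def nearest_hit(origin, direction, spheres):
    """Return (distance, center) of the closest sphere hit, or None."""
    best = None
    for center, radius in spheres:
        t = hit_sphere(origin, direction, center, radius)
        if t is not None and (best is None or t < best[0]):
            best = (t, center)
    return best


def trace(origin, direction, spheres, light_pos):
    """Brightness in [0, 1] seen along one primary (camera) ray."""
    hit = nearest_hit(origin, direction, spheres)
    if hit is None:
        return 0.0  # the ray left the scene: background
    t, center = hit
    point = add(origin, scale(direction, t))
    normal = sub(point, center)
    normal = scale(normal, 1.0 / math.sqrt(dot(normal, normal)))

    to_light = sub(light_pos, point)
    light_dist = math.sqrt(dot(to_light, to_light))
    light_dir = scale(to_light, 1.0 / light_dist)

    # Shadow ray: anything between the hit point and the light blocks it.
    blocker = nearest_hit(point, light_dir, spheres)
    if blocker is not None and blocker[0] < light_dist:
        return 0.0
    return max(0.0, dot(normal, light_dir))


big = ((0.0, 0.0, -5.0), 1.0)
blocker = ((0.0, 2.5, -2.0), 0.5)  # sits between the big sphere and the light
light = (0.0, 5.0, 0.0)
camera, forward = (0.0, 0.0, 0.0), (0.0, 0.0, -1.0)

lit = trace(camera, forward, [big], light)
shadowed = trace(camera, forward, [big, blocker], light)
```

A full ray tracer repeats `trace` for every pixel and spawns further rays for reflections and refractions, which is where the rendering cost mentioned above comes from.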

2. RASTERIZATION

Rasterization is one of the earliest rendering techniques. It works by treating the object as a polygonal mesh. The polygons have vertices that carry information such as position, texture, and color. These vertices are then projected onto a plane that is perpendicular to the viewing direction (i.e., the camera’s line of sight).

With the vertices acting as boundaries, the pixels between them are filled with the appropriate colors. Imagine painting a picture in which each color already has an outline drawn for it: that is rendering via rasterization.

Rasterization is a fast method of rendering. It is still widely used today, particularly for real-time rendering (e.g., computer games, simulations, and interactive GUIs).
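The two stages described above, projecting vertices onto a plane and filling the pixels between them, can be sketched as follows. This is a simplified illustration, assuming a camera at the origin looking down the negative z axis and a single flat-colored triangle; the function names and the `focal` parameter are assumptions, not part of any particular graphics API.

```python
def project(vertex, width, height, focal=1.0):
    """Perspective-project a 3D point (z < 0, in front of the camera) to pixels."""
    x, y, z = vertex
    plane_x = focal * x / -z
    plane_y = focal * y / -z
    # Map the [-1, 1] image plane to pixel coordinates (y grows downward).
    return ((plane_x + 1.0) * 0.5 * width, (1.0 - plane_y) * 0.5 * height)


def edge(a, b, p):
    """Signed area test: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(triangle, width, height):
    """Return a grid of characters with '#' where the triangle covers a pixel."""
    a, b, c = (project(v, width, height) for v in triangle)
    image = [["." for _ in range(width)] for _ in range(height)]
    area = edge(a, b, c)
    if area == 0:
        return image  # degenerate triangle covers nothing
    for y in range(height):
        for x in range(width):
            center = (x + 0.5, y + 0.5)
            weights = (edge(b, c, center), edge(c, a, center), edge(a, b, center))
            # The pixel is inside when it lies on the same side of all three edges.
            if all(w * area >= 0 for w in weights):
                image[y][x] = "#"
    return image


triangle = [(-1.0, -1.0, -2.0), (1.0, -1.0, -2.0), (0.0, 1.0, -2.0)]
image = rasterize(triangle, 8, 8)
print("\n".join("".join(row) for row in image))
```

In a real rasterizer the three edge weights are also used to blend the colors and texture coordinates stored at the vertices, so each filled pixel gets an interpolated value rather than a single fill character.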

3. RAY CASTING

Although useful, rasterization has limitations when objects overlap: if surfaces overlap, the one drawn last is the one that appears in the render, which can result in the wrong object being shown. To address this, the Z-buffer was introduced for rasterization. It stores a depth value for each pixel, which is used to determine which surface is in front of or behind another from a given point of view.
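The Z-buffer idea fits in a few lines of code. The sketch below draws axis-aligned rectangles at constant depth instead of real polygons, which is an assumption made to keep the example short; the function names are likewise made up for illustration.

```python
def draw_rect(color_buf, depth_buf, rect):
    """Draw one rectangle, keeping a pixel only if it is closer than what is stored."""
    x0, y0, x1, y1, depth, color = rect
    for y in range(y0, y1):
        for x in range(x0, x1):
            if depth < depth_buf[y][x]:  # the depth test
                depth_buf[y][x] = depth
                color_buf[y][x] = color


def render(rects, width, height):
    """Render rectangles given as (x0, y0, x1, y1, depth, color) tuples."""
    color_buf = [["." for _ in range(width)] for _ in range(height)]
    # Every pixel starts infinitely far away.
    depth_buf = [[float("inf") for _ in range(width)] for _ in range(height)]
    for rect in rects:
        draw_rect(color_buf, depth_buf, rect)
    return color_buf


near = (0, 0, 4, 4, 1.0, "A")  # closer to the camera
far = (2, 2, 6, 6, 5.0, "B")   # farther away, overlaps the near one

near_first = render([near, far], 6, 6)
far_first = render([far, near], 6, 6)
```

Both drawing orders give the same picture: in the overlapping region the nearer rectangle "A" wins, regardless of which one was drawn last.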

Ray casting takes a different approach that avoids the problem altogether. Unlike basic rasterization, ray casting does not depend on the order in which overlapping surfaces are drawn.

Ray casting, as the name suggests, casts rays onto the model from the camera’s point of view. One ray is sent through each pixel of the picture plane. The first surface a ray hits is the one displayed for that pixel, and any intersections beyond it are not drawn.
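As a minimal sketch of this per-pixel process, the example below casts one ray through each pixel of a small image and records the label of the nearest sphere it hits. The camera is assumed to sit at the origin, outside every sphere, looking down the negative z axis; the scene, the function names, and the text output are illustrative assumptions.

```python
import math


def hit_sphere(direction, center, radius):
    """Distance from the origin along a unit ray to the sphere's front, or None."""
    # The ray starts at the camera position (0, 0, 0).
    oc = tuple(-c for c in center)
    b = sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None
    t = -b - math.sqrt(disc)
    return t if t > 0 else None


def cast(width, height, spheres):
    """Return text rows; each character is the label of the first surface hit."""
    rows = []
    for j in range(height):
        row = ""
        for i in range(width):
            # Point on the picture plane at z = -1, with x and y in [-1, 1].
            x = (i + 0.5) / width * 2.0 - 1.0
            y = 1.0 - (j + 0.5) / height * 2.0
            length = math.sqrt(x * x + y * y + 1.0)
            direction = (x / length, y / length, -1.0 / length)

            nearest, label = math.inf, "."
            for center, radius, name in spheres:
                t = hit_sphere(direction, center, radius)
                if t is not None and t < nearest:  # keep only the first hit
                    nearest, label = t, name
            row += label
        rows.append(row)
    return rows


scene = [((0.0, 0.0, -5.0), 2.0, "B"), ((0.0, 0.0, -3.0), 0.5, "A")]
rows = cast(9, 9, scene)
print("\n".join(rows))
```

The small sphere "A" hides the part of the large sphere "B" behind it, and the result does not depend on the order of the spheres in the list, because each pixel keeps only the closest intersection.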

4. RENDERING EQUATION

Progress in rendering ultimately led to the rendering equation, which aims to model more precisely how light behaves in reality. The approach takes into account that light reaches a surface from everything around it, not simply from a single light source. In contrast to basic ray tracing, which mainly accounts for direct lighting, this equation attempts to account for all the light in the scene, including light bounced off other surfaces. The result of solving this equation is known as global illumination or indirect lighting.
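For reference, the rendering equation is commonly written in the following form. The symbol names below follow the usual textbook convention and are not taken from this article:

$$
L_o(\mathbf{x}, \omega_o) = L_e(\mathbf{x}, \omega_o) + \int_{\Omega} f_r(\mathbf{x}, \omega_i, \omega_o)\, L_i(\mathbf{x}, \omega_i)\, (\omega_i \cdot \mathbf{n})\, d\omega_i
$$

Here $L_o$ is the light leaving point $\mathbf{x}$ in direction $\omega_o$, $L_e$ is the light the surface emits itself, $L_i$ is the light arriving from direction $\omega_i$, $f_r$ is the surface's reflectance function (how much incoming light is redirected toward $\omega_o$), $\mathbf{n}$ is the surface normal, and the integral runs over the hemisphere $\Omega$ above the surface. Because $L_i$ at one point is the $L_o$ of some other point, the equation is recursive, which is why renderers approximate it rather than solve it exactly.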


- What is 3D Rendering: Techniques and Processes - August 26, 2021
